AI is viable when the economics of delegation work

AI starts to make business sense when work can be delegated clearly, checked quickly, and contained safely when something goes wrong.

By Tim Pickup, Founder, Fizzcube

4 min read

AI creates the most value where work can be delegated well.

Most companies do not have a model problem. They have a delegation problem. AI can produce work. We are well past that argument. The harder question is whether the work can be handed off, checked, and trusted cheaply enough to matter.

A lot of organisations are still running pilots, internal experiments, and one-off use cases. That is fine as a starting point. But pilots are not the same thing as operational value. The companies getting real returns tend to do something less glamorous: they pick a few workflows where delegation works, measure them properly, and expand from there.

McKinsey reports that 88 percent of organisations now use AI in at least one business function, but only about one-third say they are scaling it across the enterprise, and just 39 percent report any enterprise-level EBIT impact from AI. That gap matters. Access to AI is no longer the issue. Making it pay is.

Source: McKinsey – The State of AI

The economics of delegation

The business test is simpler than most AI strategy decks make it sound.

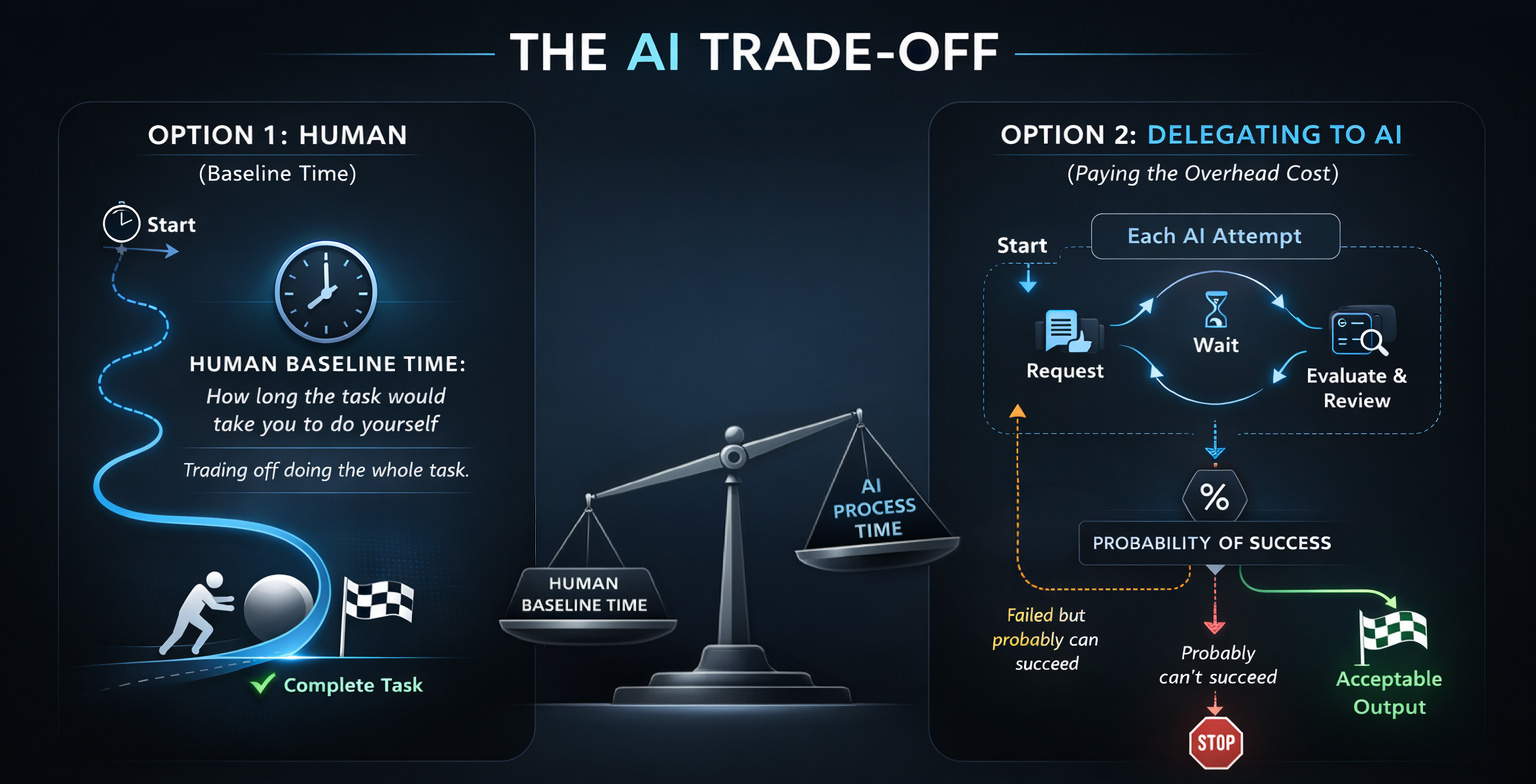

AI is useful when the time it saves is greater than the cost of review, rework, and risk.

If a task takes thirty minutes by hand, and AI produces a decent draft in seconds that needs twenty minutes of review, you may still come out ahead. If the task takes five minutes without AI and ten minutes to verify with it, the maths falls apart.

The same logic gets stricter when mistakes are expensive. Legal exposure, compliance errors, reputational damage, and customer harm all raise the bar.
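The arithmetic above can be sketched in a few lines. This is an illustrative sketch rather than a standard formula: the function name `net_minutes_saved` and the `rework_minutes` and `expected_risk_minutes` parameters are assumptions introduced here, and risk is crudely expressed as expected minutes of clean-up per task.

```python
def net_minutes_saved(manual_minutes, review_minutes,
                      rework_minutes=0, expected_risk_minutes=0):
    """Minutes saved per task by delegating to AI (negative means a loss).

    All parameter names are hypothetical; risk is modelled as an average
    number of clean-up minutes per task, which is a simplifying assumption.
    """
    delegated_cost = review_minutes + rework_minutes + expected_risk_minutes
    return manual_minutes - delegated_cost


# The two examples from the text.
print(net_minutes_saved(manual_minutes=30, review_minutes=20))  # 10
print(net_minutes_saved(manual_minutes=5, review_minutes=10))   # -5

# The same thirty-minute task with an assumed 15 minutes of expected
# risk cost per task: the saving disappears.
print(net_minutes_saved(30, 20, expected_risk_minutes=15))      # -5
```

The third call is the point of the paragraph above: a task that looks worthwhile on review time alone can turn negative once the cost of mistakes is priced in.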

That is why leaders should spend less time obsessing over generation and more time on evaluation.

The early promise of AI was speed. The management problem is deciding when that speed turns into work you can actually rely on.

In practice, AI delegation works best when four conditions are in place: the outcome is clear, the work can be checked quickly, the person reviewing it understands the domain, and success can be measured over time.

Clear outcomes

AI does better when the target is obvious. A clear brief, a defined format, and a shared idea of what "good" looks like all make a difference.

The organisations seeing the best results are not just dropping a chatbot into an existing process and hoping for the best. They are redesigning the workflow around delegation. McKinsey's research suggests high performers are much more likely to rethink how work is structured before they automate it.

That usually means the boring stuff matters most. A good brief. A usable rubric. A definition of done that everyone can see. Those things matter more than clever prompting.

Cheap verification

The best AI use cases are often not the flashiest ones. They are the ones where a human can check the work quickly.

That usually includes first drafts, summaries, structured research, internal analysis, reporting prep, proposal drafting, and routine customer communication. In those cases, mistakes are usually visible and relatively cheap to fix.

The opposite is also true. Once you move into legal judgement, financial exposure, or regulated decisions, human oversight has to get much heavier.

AI can help with drafting, suggesting improvements, and running basic checks against a rubric. That is useful. It is not the same as accountability.

AI critique can support governance. It cannot replace it.

Domain expertise

One pattern shows up again and again: AI works better when the person using it already understands the field.

Domain knowledge lowers the cost of review. Someone experienced can spot shaky assumptions, weak reasoning, or nonsense dressed up as confidence. Someone without that background is much easier to fool.

Research from Harvard Business School on BCG consultants using GPT-4 makes the point well. On tasks inside the model's capability frontier, consultants worked faster and produced better results. On a task outside that frontier, the consultants using AI were 19 percentage points less likely to reach a correct answer than those working without it.

Source: Harvard Business School – Navigating the Jagged Technological Frontier

For leaders, that is the important bit. The bottleneck is often not the model. It is whether the manager or operator can tell if the output is correct and safe to use.

Deep domain expertise becomes more valuable in an AI-heavy world, not less.

Measurable success rates

Another useful shift is to stop thinking about AI in yes-or-no terms. It does not need to be perfect to be useful.

What matters is the success rate.

OpenAI introduced a benchmark called GDPval to measure how well frontier models perform on real-world, economically valuable tasks across different occupations. On a meaningful share of tasks, model outputs were rated comparable to or better than expert-produced work.

That does not mean whole roles are ready for automation. It does suggest that plenty of workflows can benefit from a strong first pass that reduces the amount of expert effort needed afterwards.

So the question is not really "Does AI work?" It is "Does it work often enough to justify controlled deployment?"
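One way to put a number on "often enough" is a break-even success rate. The sketch below rests on a simple assumed model, not on anything from GDPval or the research cited here: every delegated task costs some review time, and when the output is rejected the task is redone by hand with an extra failure cost on top. The function name and parameters are hypothetical.

```python
def break_even_success_rate(manual_minutes, review_minutes, failure_cost_minutes=0):
    """Minimum share of AI outputs that must be usable for delegation to pay.

    Assumed model: expected cost with AI is
        review + (1 - p) * (manual + failure_cost)
    and delegation pays when that is below the manual cost, which gives
        p > (review + failure_cost) / (manual + failure_cost).
    A result of 1.0 or more means delegation never pays under this model.
    """
    denominator = manual_minutes + failure_cost_minutes
    if denominator <= 0:
        raise ValueError("manual_minutes + failure_cost_minutes must be positive")
    return (review_minutes + failure_cost_minutes) / denominator


# Cheap mistakes: a 30-minute task with 10 minutes of review.
print(round(break_even_success_rate(30, 10), 2))      # 0.33

# Same task, but each failure costs an assumed extra 60 minutes.
print(round(break_even_success_rate(30, 10, 60), 2))  # 0.78
```

Under these assumptions the required success rate climbs from about a third to more than three-quarters once failures become expensive, which is why the same model can be worth deploying in one workflow and not in another.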

When to proceed and when to stop

AI adoption should be run on operating metrics, not gut feel.

A workflow is worth keeping only while a few indicators move in the right direction.

Cycle time should come down.

First-pass acceptance should go up.

Exception rates should stay manageable.

Commercial impact should start to show up.

If those numbers stall, the workflow probably needs redesigning or shutting down.
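The four indicators above can be turned into a simple period-over-period check. This is a minimal sketch under stated assumptions: the `PeriodMetrics` fields, the `review_workflow` function, and the 10 percent exception threshold are all illustrative choices, not figures from the article or its sources.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    cycle_time_hours: float       # average time from request to accepted output
    first_pass_acceptance: float  # share of outputs accepted without rework (0 to 1)
    exception_rate: float         # share of tasks escalated or failed (0 to 1)
    commercial_impact: float      # net value attributed to the workflow, any currency


def review_workflow(previous, current, max_exception_rate=0.10):
    """Compare two periods and return (decision, list of failed checks).

    The 0.10 exception threshold is an assumed default; set it per workflow.
    """
    checks = {
        "cycle time down": current.cycle_time_hours < previous.cycle_time_hours,
        "first-pass acceptance up": current.first_pass_acceptance > previous.first_pass_acceptance,
        "exceptions manageable": current.exception_rate <= max_exception_rate,
        "commercial impact visible": current.commercial_impact > 0,
    }
    failed = [name for name, passed in checks.items() if not passed]
    decision = "proceed" if not failed else "redesign or stop"
    return decision, failed


# Made-up numbers for three review periods.
q1 = PeriodMetrics(6.0, 0.55, 0.08, 0.0)
q2 = PeriodMetrics(4.5, 0.70, 0.06, 12000.0)
q3 = PeriodMetrics(4.6, 0.68, 0.15, 9000.0)

print(review_workflow(q1, q2))  # ('proceed', [])
print(review_workflow(q2, q3))
```

In the second comparison, cycle time, acceptance, and exceptions all fail their checks, so the sketch returns "redesign or stop" along with the names of the failed indicators. The value of writing it down is less the code than the fact that the stop condition is agreed before the demo, not after.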

A lot of AI initiatives fail because they are judged on a slick demo instead of measurable results. Fluent output can look like progress long before the economics actually work.

That is why disciplined evaluation matters. It stops teams from scaling workflows that were never viable in the first place.

The management discipline

The practical takeaway is straightforward.

AI creates the most value where work can be delegated clearly, checked quickly, and contained safely when something goes wrong.

That pushes the conversation away from model selection and back toward management design.

The companies that win here will not necessarily be the ones running the most experiments. They will be the ones that know which workflows can be delegated, how to measure them early, and when to stop pushing automation into places it does not fit.

That is the real discipline behind AI adoption.

Not asking whether the technology is impressive.

Asking whether the economics of delegation work.